AI Testing

ratl.ai/studio

(2024-2025)

Led the full design experience for ratl.ai, from concept to interface, delivering a seamless, visually immersive, and engaging product

SaaS

Web

B2B

Role

Lead Designer

Services

UX Design | Visual Design | Design System | Product Testing

Context

ratl.ai is a next-generation platform that empowers engineering teams with autonomous AI agents to handle software testing workflows, from API, functional, and UI tests to load testing. The aim: reduce manual effort, accelerate releases, and build confidence in continuous delivery.

Problem

Traditional quality engineering is slow, manual, and brittle:

Test suites often rely on handcrafted scripts or collections (e.g., Postman), which require ongoing maintenance whenever APIs evolve.

Regression cycles take days or weeks, slowing releases and increasing risk.

Engineering teams waste time writing, updating, and debugging tests instead of building features.

Wider test coverage (load, E2E, accessibility, web) requires multiple tools and specialized engineers.

The result? Slow certification cycles, delayed releases, and confidence gaps in production readiness.

This complexity frustrates both developers and QA engineers.

My role

For this project, I collaborated closely with the core team. I led the design process from research and ideation through testing to final handoff. We maintained regular feedback loops to align on goals, address challenges, and deliver a product that met user needs.

KPIs and Targets

To quantify impact, ratl.ai testing initiatives track:

Test Efficiency

Time to generate and run regression suite (target: ≤10 minutes)

Human effort hours saved per run (target: ≥80% reduction)

Test Coverage

API endpoints covered (target: 100% of spec endpoints)

Automated negative and edge cases generated (target: ≥90% coverage)

Release Impact

Regression cycle time reduction

Number of defects escaping into production

Feedback loop time between dev → test → fix

These metrics help teams measure both operational and quality improvements.
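As a rough illustration of how the numeric targets above could be checked for a single run, here is a minimal sketch. The `RunMetrics` fields, the function names, and the sample values are all hypothetical and are not taken from ratl.ai; the negative and edge case target is left out because the case study does not say how that coverage is measured.

```python
from dataclasses import dataclass


@dataclass
class RunMetrics:
    """Measurements for one regression run (all field names are hypothetical)."""
    suite_minutes: float        # time to generate and run the regression suite
    manual_hours_before: float  # human effort per run before automation
    manual_hours_after: float   # human effort per run with automation
    endpoints_in_spec: int      # endpoints declared in the API spec
    endpoints_covered: int      # endpoints exercised by at least one test


def effort_reduction(m: RunMetrics) -> float:
    """Fraction of human effort saved per run (0.85 means 85% saved)."""
    return 1 - m.manual_hours_after / m.manual_hours_before


def check_targets(m: RunMetrics) -> dict[str, bool]:
    """Compare one run against the targets listed in the case study."""
    return {
        "suite_time": m.suite_minutes <= 10,                        # <= 10 minutes
        "effort_saved": effort_reduction(m) >= 0.80,                # >= 80% reduction
        "endpoint_coverage": m.endpoints_covered >= m.endpoints_in_spec,  # 100%
    }


# Illustrative values only, not measured results.
run = RunMetrics(suite_minutes=8.5, manual_hours_before=20, manual_hours_after=3,
                 endpoints_in_spec=42, endpoints_covered=42)
print(check_targets(run))
```

Keeping each target as a named boolean makes it straightforward to surface a per-KPI pass or fail indicator on a dashboard.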

Approach

1. Context & Onboarding

Teams begin by feeding ratl.ai their API surfaces (OpenAPI, collections, or direct request definitions) and connecting existing SDLC tools. The system assesses context and readiness.

2. Autonomous Test Generation

ratl.ai parses API definitions and automatically produces a full suite of test cases, covering positive, negative, validation, and boundary conditions, without human input.

3. Execution & Monitoring

Test runs are triggered via CI/CD. Agents execute tests, monitor results in real time, and log detailed outputs. Dashboards show pass/fail status and trends.

4. Reporting & Feedback

Engineers receive actionable summaries, detailed logs, and easy-to-digest results that feed directly into release decisions and into messaging and bug tracking tools (Slack, Jira, etc.).
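To make step 2 more concrete, the sketch below derives positive, boundary, negative, and validation cases for a single integer parameter. It is a simplified, rule-based stand-in and not ratl.ai's actual agent-driven method; `generate_cases` and the `page_size` parameter are hypothetical, while `minimum` and `maximum` are the standard JSON Schema keywords used in OpenAPI definitions.

```python
def generate_cases(name: str, schema: dict) -> list[dict]:
    """Derive test cases for one integer parameter from its schema.

    `schema` is expected to carry the JSON Schema keywords `minimum`
    and `maximum` (both inclusive).
    """
    lo, hi = schema["minimum"], schema["maximum"]
    mid = (lo + hi) // 2
    cases = [
        {"kind": "positive", "value": mid, "expect_ok": True},       # typical value
        {"kind": "boundary", "value": lo, "expect_ok": True},        # lowest valid
        {"kind": "boundary", "value": hi, "expect_ok": True},        # highest valid
        {"kind": "negative", "value": lo - 1, "expect_ok": False},   # just below range
        {"kind": "negative", "value": hi + 1, "expect_ok": False},   # just above range
        {"kind": "validation", "value": "not-a-number", "expect_ok": False},  # wrong type
    ]
    return [{"param": name, **case} for case in cases]


# Hypothetical parameter definition, as it might appear in an OpenAPI spec.
for case in generate_cases("page_size", {"type": "integer", "minimum": 1, "maximum": 100}):
    print(case)
```

Each generated case records whether the API is expected to accept the value, which is what an execution agent would compare against the real response.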

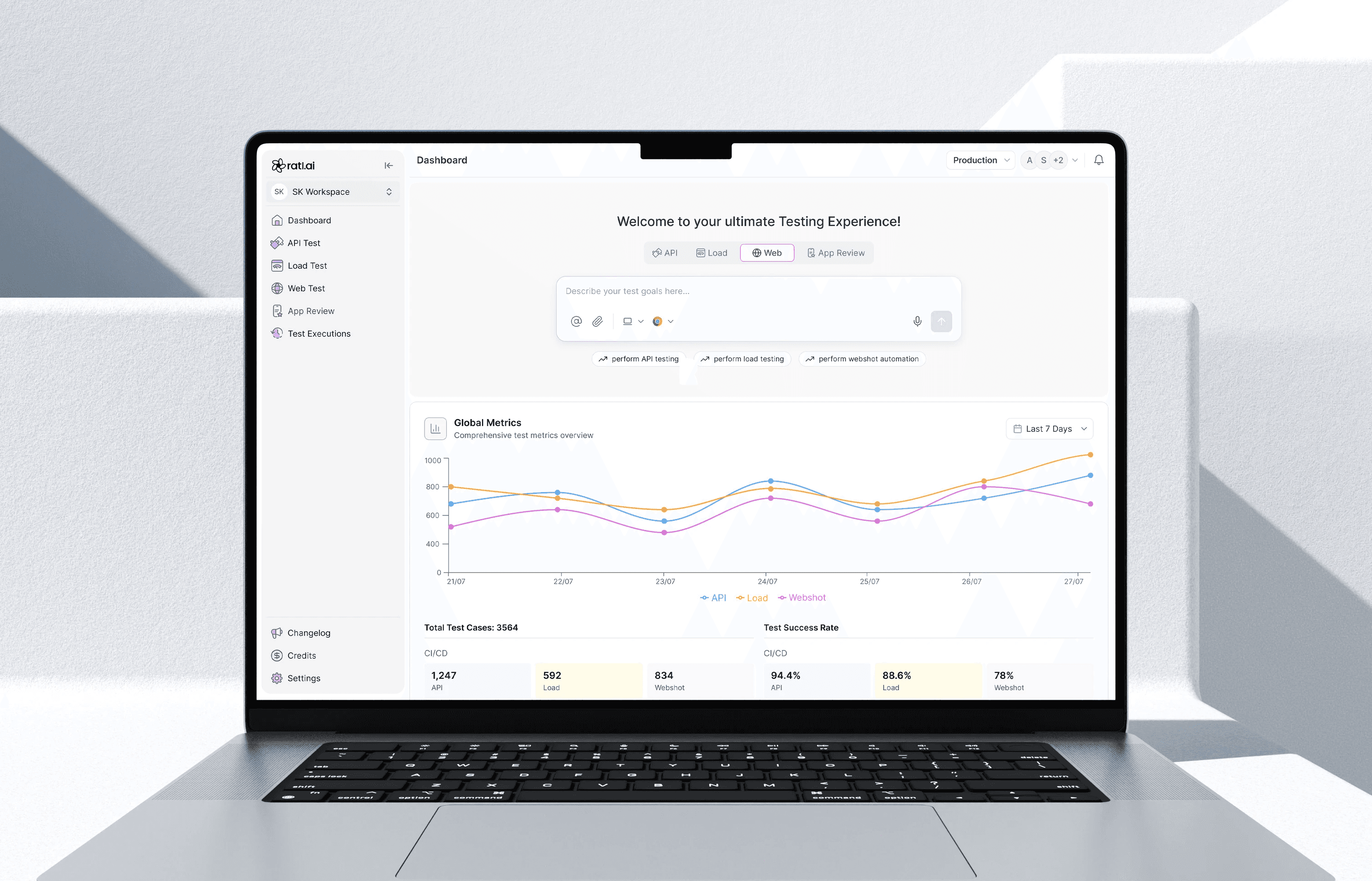

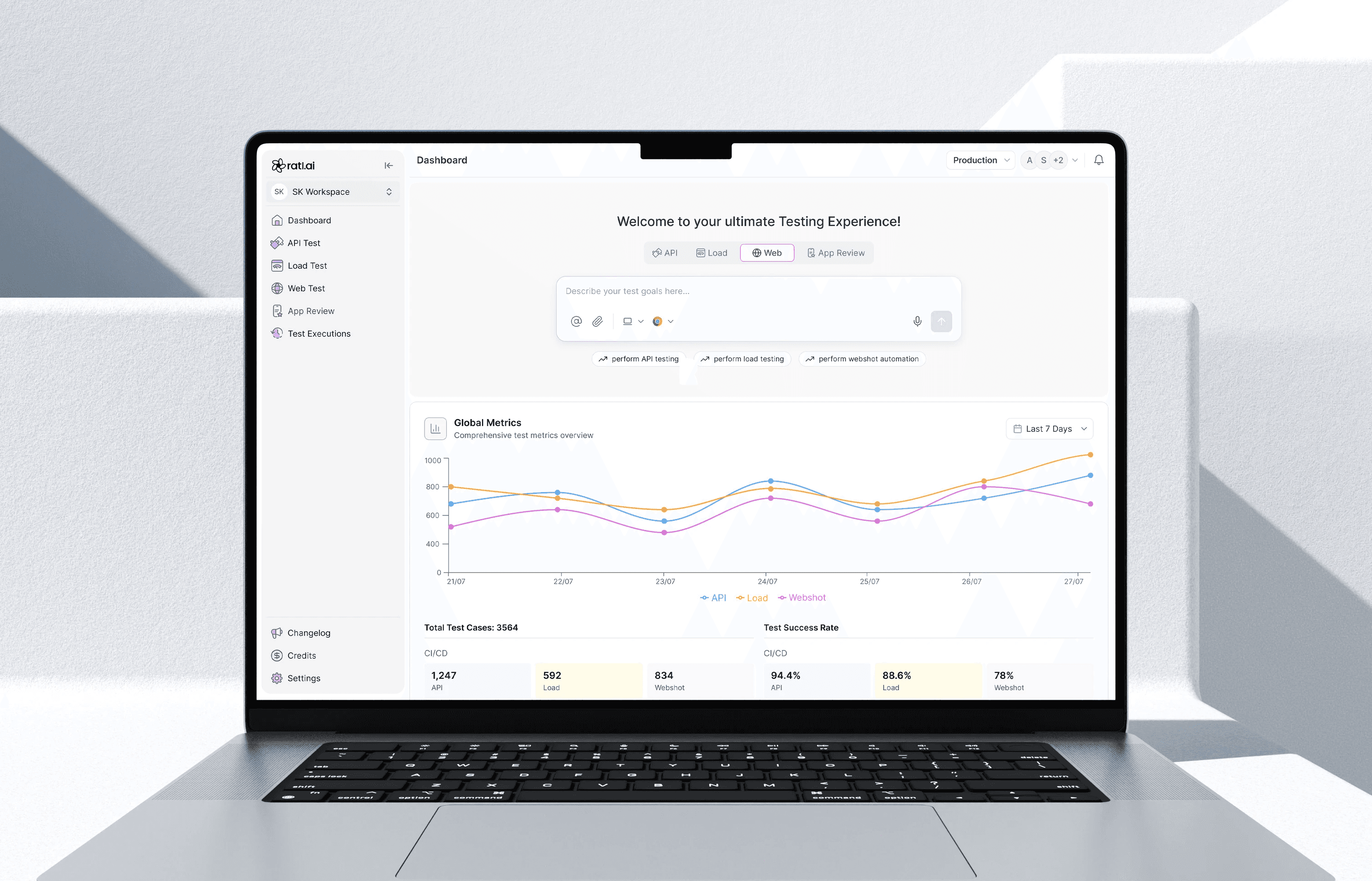

Manage everything in one place

I proposed a centralised dashboard that consolidates all testing functionality into one cohesive space, reducing complexity and letting users control their entire workflow from a single place.

/Studio

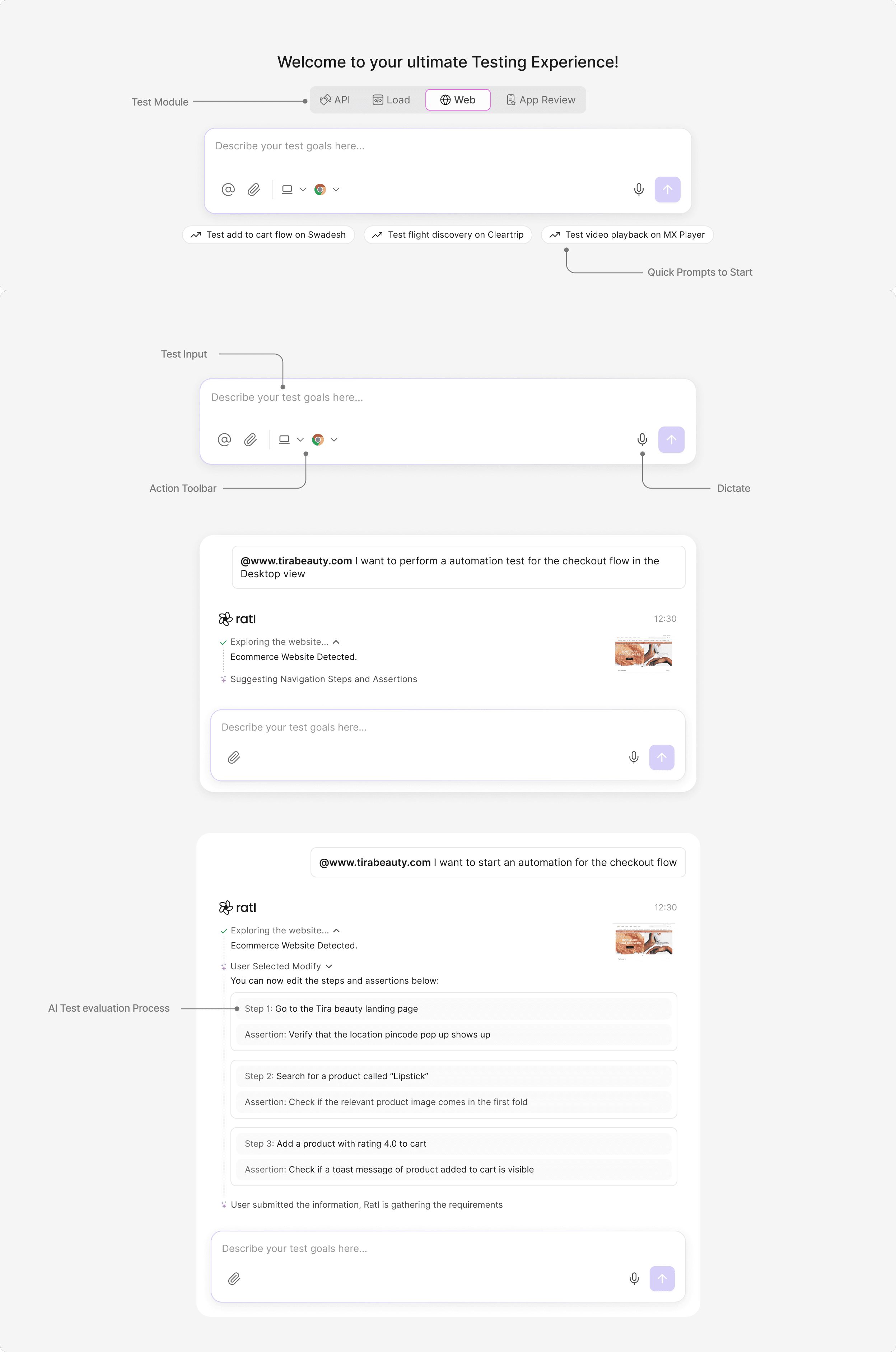

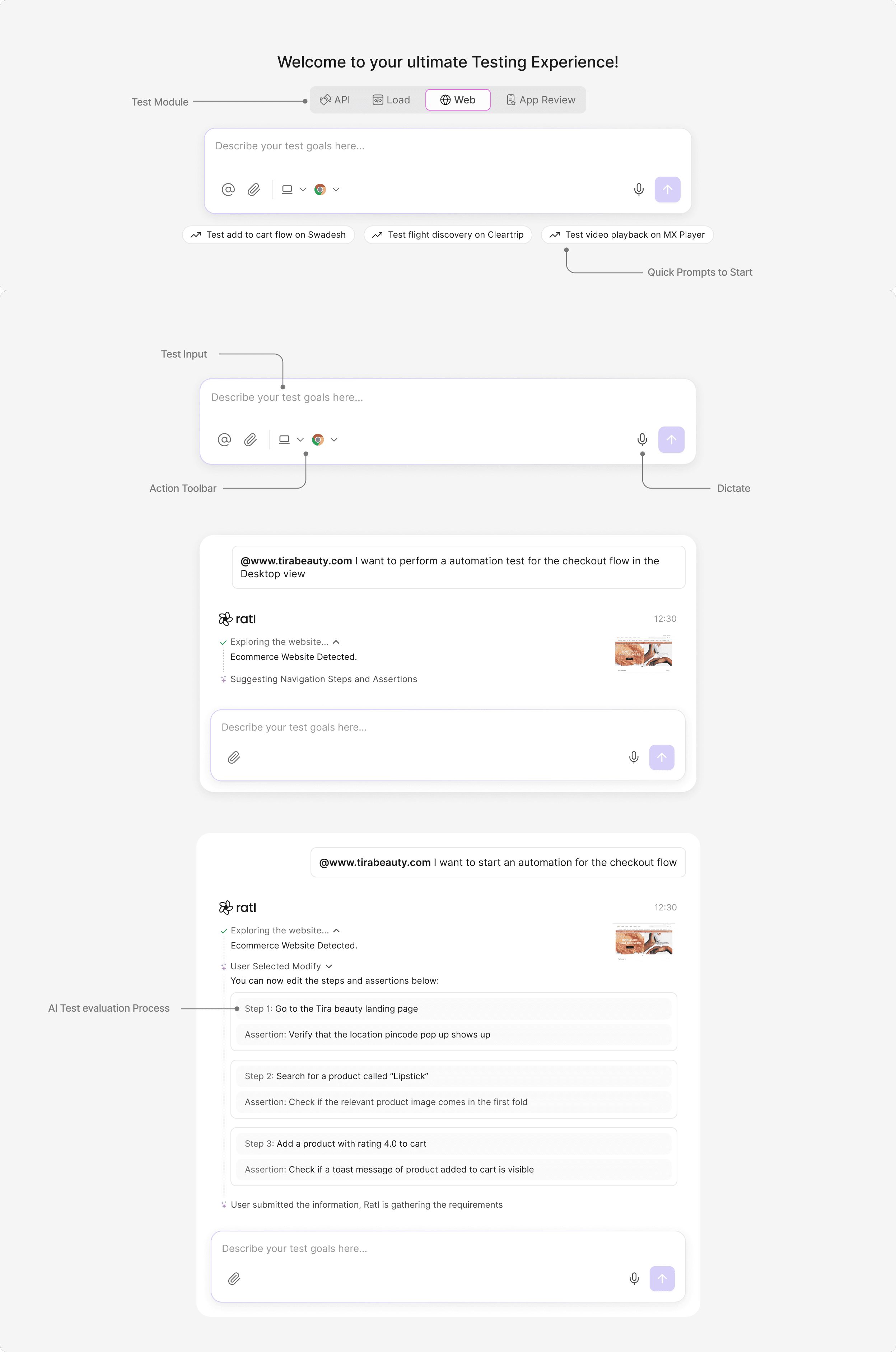

Visualizing the Testing Journey

The goal was to build a space where users could observe automation in real time, understanding not just the outcome of a test but also how it reached that outcome. This interface bridges the gap between technical automation and visual clarity, empowering QA engineers and non-technical users alike to collaborate and debug efficiently.

Streamlined Monitoring for Testing Cycles

The goal was to simplify the process of tracking, reviewing, and managing test results. By centralising all execution data, users can quickly evaluate test outcomes, monitor ongoing runs, and identify potential issues without navigating through multiple screens.

Active Users in Workspace

The goal of this section is to make workspace activity more visible and accessible, reducing communication gaps and encouraging a more connected workflow.

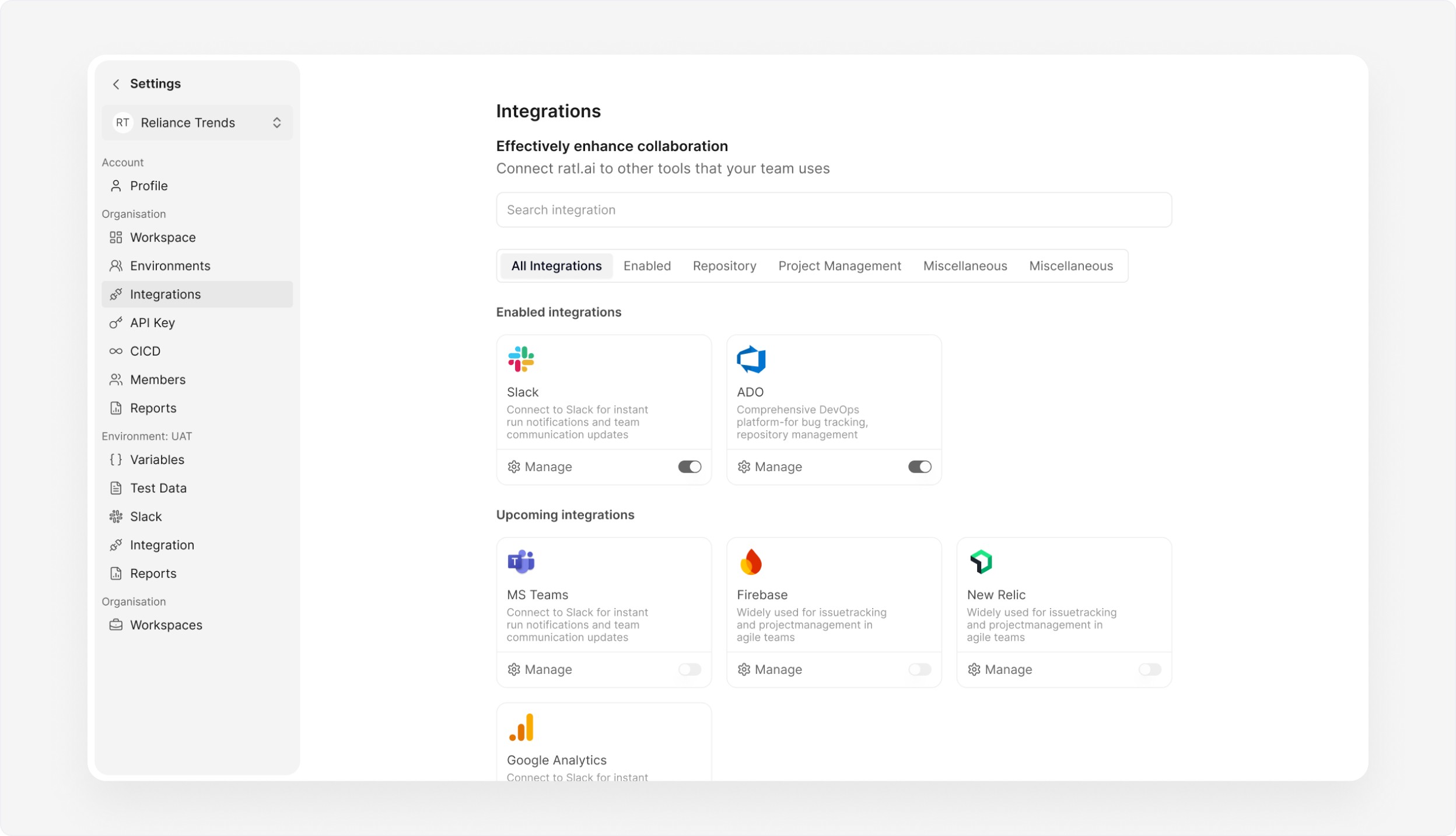

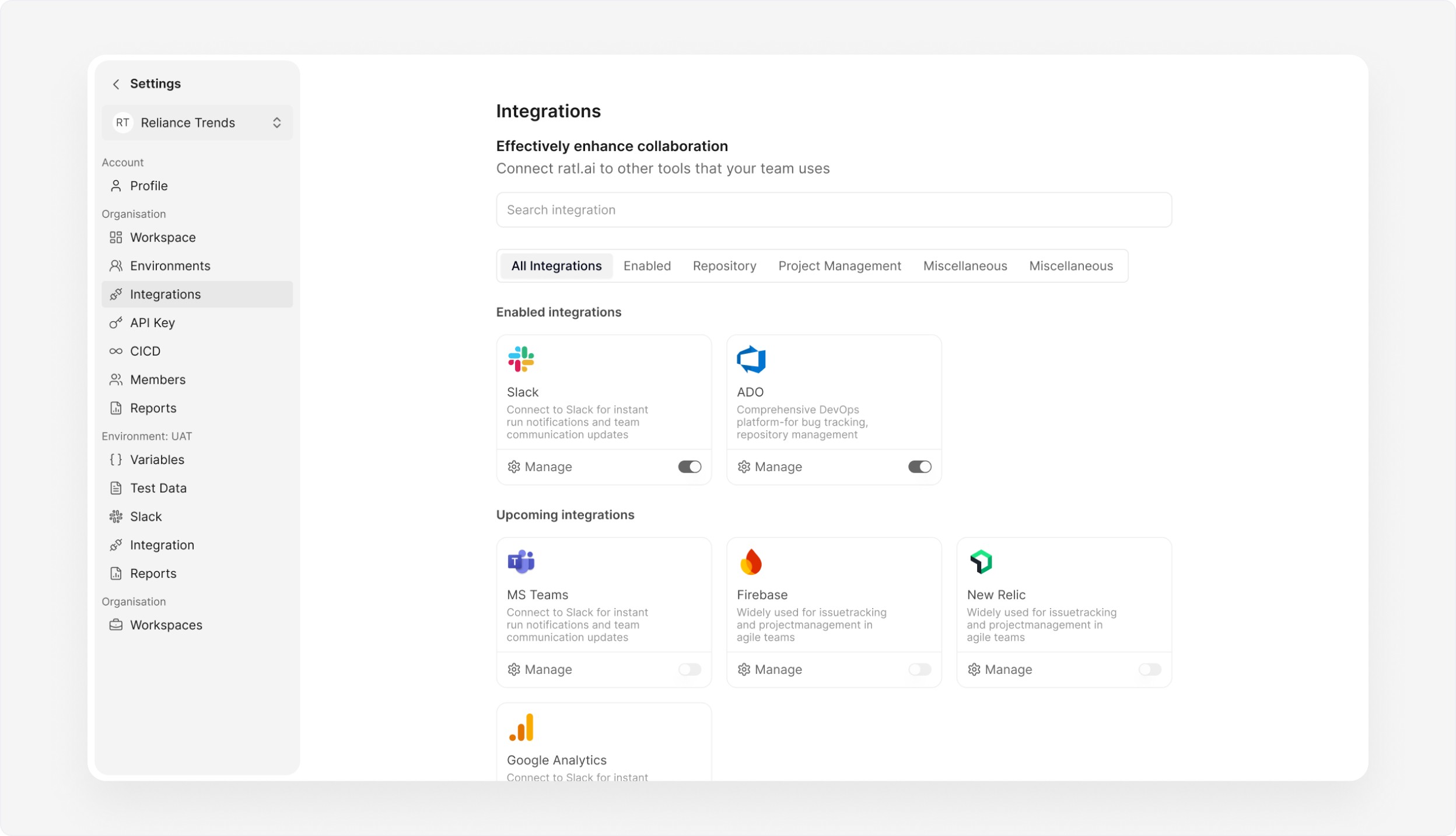

Integrations: Streamlining Collaboration

The goal was to simplify setup, reduce context switching, and create a unified experience where users could manage notifications, track progress, and communicate seamlessly.

Design System

A lightweight design system with shared variables was maintained to ensure visual and interaction consistency as ratl.ai scaled. Core foundations like colors, typography, spacing, and status states (pass, fail, running, skipped) were defined as reusable variables, allowing components to adapt across different testing workflows without duplication. This approach enabled faster iteration, reduced design drift, and ensured that new features could be shipped without breaking existing patterns.
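One way to picture status states as shared variables is a single token table that every component reads from. The sketch below is illustrative only: the colour values, labels, and the `badge` helper are hypothetical and do not reflect the real ratl.ai palette or components.

```python
from enum import Enum


class Status(Enum):
    """The four status states named in the design system."""
    PASS = "pass"
    FAIL = "fail"
    RUNNING = "running"
    SKIPPED = "skipped"


# Hypothetical token values; the actual palette is not given in the case study.
STATUS_TOKENS = {
    Status.PASS: {"color": "#2E7D32", "label": "Passed"},
    Status.FAIL: {"color": "#C62828", "label": "Failed"},
    Status.RUNNING: {"color": "#1565C0", "label": "Running"},
    Status.SKIPPED: {"color": "#757575", "label": "Skipped"},
}


def badge(status: Status) -> str:
    """Render a status badge using only the shared tokens."""
    token = STATUS_TOKENS[status]
    return f'<span style="color: {token["color"]}">{token["label"]}</span>'


print(badge(Status.FAIL))
```

Because components look values up by status rather than hard-coding them, changing a token in one place updates every workflow that uses it.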

My focus was to balance power with simplicity, ensuring advanced capabilities never overwhelmed the user while remaining easy to discover and use.
